Reclaiming the right to music storytelling: how network technologies can help

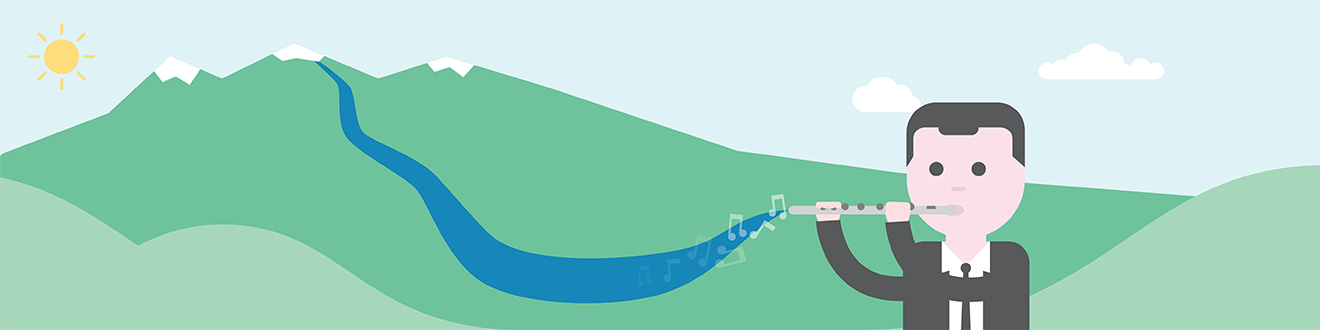

What if, instead of just looking at a seismograph, we could listen to earthquakes in the form of a live flute performance? In other words, can we make music from the vibrations of a specific place on Earth?

At a world premiere at the 2023 Internet2 Community Exchange, Dr Domenico Vicinanza, music composer and Senior Research Engagement Officer at GÉANT, did just that. Using a computer programme he developed himself, he mapped real-time seismographic data from Yellowstone National Park to musical notes, with eduMEET used to connect and stream the data. The resulting music was seen for the first time, and performed on the spot, by Dr Alyssa Schwartz, Visiting Assistant Professor of Flute and Musicology at Fairmont State University.

How is this possible in practice?

Imagine a scientist creating a visual representation of their data, such as a line on a graph showing a steady, linear increase. A musician can take this and associate each value with a note by choosing a musical scale. Once the scale is established, the mapping does the rest: a melody forms that follows the original scientific data exactly.
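To make the idea concrete, here is a minimal Python sketch of mapping values onto a scale. It assumes a C major scale over two octaves starting at middle C (MIDI note 60) and a simple linear rescaling of the data range onto the scale degrees; these choices and all helper names are illustrative assumptions, not taken from Dr Vicinanza's software.

```python
# Hypothetical choices for illustration: C major, two octaves from middle C.
C_MAJOR_STEPS = [0, 2, 4, 5, 7, 9, 11]  # semitone offsets of the scale within one octave
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def scale_pitches(root_midi=60, steps=C_MAJOR_STEPS, octaves=2):
    """MIDI note numbers of the scale, lowest first (60 is middle C)."""
    return [root_midi + 12 * octave + step for octave in range(octaves) for step in steps]


def map_to_scale(values, pitches):
    """Rescale values linearly onto the scale: higher values give higher notes."""
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1  # avoid dividing by zero when the data is constant
    last = len(pitches) - 1
    return [pitches[round((v - lo) / span * last)] for v in values]


def midi_to_name(midi):
    """Note name with octave number, using the convention that MIDI 60 is C4."""
    return f"{NOTE_NAMES[midi % 12]}{midi // 12 - 1}"


data = [0, 1, 2, 3, 4, 5, 6, 7]  # a line rising steadily, as on the graph
melody = map_to_scale(data, scale_pitches())
print([midi_to_name(m) for m in melody])
# → ['C4', 'E4', 'G4', 'B4', 'C5', 'E5', 'G5', 'B5']
```

Because the mapping never reverses the order of values, a rising line always produces a rising melody; choosing a different scale (pentatonic or minor, say) changes the colour of the tune but not its shape.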

Now, let’s apply this to the seismographic activity at Yellowstone National Park. Yellowstone contains the largest concentration of active geophysical phenomena on the planet; geysers and mud pots are just two of them. The park covers around two million acres and experiences up to 2,500 earthquakes per year, although most are too small to be felt by humans. Earthquakes there often come in “swarms”, many small quakes one after the other, and a single swarm can go on for months.

The park’s seismographic activity is detected by a network of 50 seismographs run by the US Geological Survey. Sensors and microphones collect the data as a waveform, which can then be turned into music in several ways. The simplest is to lay the waveform over an empty music stave and place notes so that they follow its trend exactly. Alternatively, the distance between peaks can be mapped: the further apart the peaks, the slower the notes. Finally, one can track where the maximum energy sits in the spectrum (an eruption, for example, would show up as a big peak in the data), so that the melody tells the same story as the energy release.
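The second and third approaches can be sketched in a few lines of Python. This is an illustrative example under stated assumptions, not the software used at the premiere: peaks are taken to be simple local maxima, note length is assumed proportional to the gap between consecutive peaks, and "where the maximum energy sits" is approximated by the strongest frequency in each fixed window of samples, computed with a naive discrete Fourier transform. All function names, parameters and the toy signal are hypothetical.

```python
import cmath
import math


def find_peaks(samples):
    """Indices of simple local maxima (strictly higher than both neighbours)."""
    return [
        i
        for i in range(1, len(samples) - 1)
        if samples[i] > samples[i - 1] and samples[i] > samples[i + 1]
    ]


def peak_gaps_to_durations(samples, samples_per_beat):
    """Peak spacing: the further apart two peaks are, the longer (slower) the note.

    samples_per_beat is a hypothetical tuning parameter: a gap of that many
    samples becomes a note lasting one beat.
    """
    peaks = find_peaks(samples)
    return [(b - a) / samples_per_beat for a, b in zip(peaks, peaks[1:])]


def dominant_frequency(window, sample_rate):
    """Frequency (Hz) of the strongest non-zero DFT bin in one window."""
    n = len(window)
    best_k, best_power = 1, -1.0
    for k in range(1, n // 2 + 1):  # skip k = 0, the constant offset
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(window))
        power = abs(coeff) ** 2
        if power > best_power:
            best_k, best_power = k, power
    return best_k * sample_rate / n


def track_energy_peak(samples, sample_rate, window_size):
    """Energy tracking: report the dominant frequency of each non-overlapping window."""
    return [
        dominant_frequency(samples[i : i + window_size], sample_rate)
        for i in range(0, len(samples) - window_size + 1, window_size)
    ]


# Toy data: a slow 5 Hz wobble followed by a fast 25 Hz one, sampled at 100 Hz.
rate = 100
signal = [math.sin(2 * math.pi * 5 * t / rate) for t in range(100)] + [
    math.sin(2 * math.pi * 25 * t / rate) for t in range(100, 200)
]
durations = peak_gaps_to_durations(signal, samples_per_beat=10)
print(durations[:5], durations[-1])  # → [2.0, 2.0, 2.0, 2.0, 1.6] 0.4
print(track_energy_peak(signal, rate, window_size=100))  # → [5.0, 25.0]
```

Long notes for the slow section and short ones for the fast section show the "further apart, slower" rule; the tracked frequencies could in turn be mapped to notes with a scale like any other series of values. Summing the DFT directly keeps the sketch dependency-free but costs O(n²) per window; for real seismic streams a fast Fourier transform such as NumPy's `numpy.fft.rfft` would be the usual choice.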

If the vibrations produced by volcanic activity increase, the notes rise too. When the seismographic data shows dramatic oscillations, so does the melody. The more complex the data, the more interesting the story it tells.

Is this idea new?

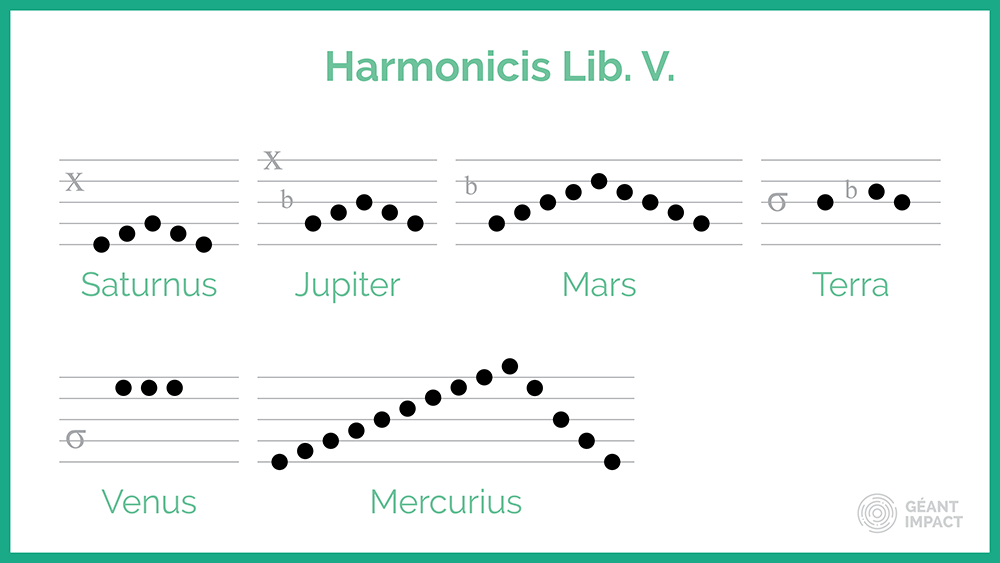

No, it is actually more than 400 years old! In 1619, the German astronomer Johannes Kepler published “The Harmony of the World”, in which he described the planets moving around the Sun not in circles but along ellipses, changing their speed as they go. He communicated these ideas with mathematical drawings and fragments of music, each one the musical equivalent of a planet's speed around the Sun: the faster the planet, the higher the note.

This is also not the first time Dr Vicinanza has created concert music from scientific data (read about the Mars soundscapes project). Nature is a wonderful reminder that we cannot have music without science.

From science to music

The software used in the live demonstration to turn data into music is the result of more than 15 years of research. It works by rescaling the numerical data from the instrument and mapping it to musical notes. The result is a stream of notes that follows the same peaks and troughs as the seismic wave: a melody that moves up and down as if it were tracking the needle of the seismograph.
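The rescaling step can be illustrated with a small streaming sketch in Python. Because a live feed has no known minimum or maximum in advance, this version assumes a fixed calibration range (the ±1000 "counts" below are a hypothetical value) and clamps anything outside it, then maps the result linearly onto a range of MIDI note numbers. It uses plain chromatic pitches; how the actual software handles ranges and scales is not described in the article.

```python
from typing import Iterable, Iterator


def rescale(x: float, in_lo: float, in_hi: float, out_lo: float, out_hi: float) -> float:
    """Linearly map x from [in_lo, in_hi] to [out_lo, out_hi], clamping outliers."""
    x = min(max(x, in_lo), in_hi)  # a huge spike is pinned to the top of the range
    return out_lo + (x - in_lo) * (out_hi - out_lo) / (in_hi - in_lo)


def samples_to_notes(
    samples: Iterable[float],
    in_lo: float = -1000.0,  # hypothetical calibration range of the sensor
    in_hi: float = 1000.0,
    low_note: int = 48,  # MIDI C3
    high_note: int = 84,  # MIDI C6
) -> Iterator[int]:
    """Turn a (possibly endless) stream of readings into MIDI note numbers."""
    for s in samples:
        yield round(rescale(s, in_lo, in_hi, low_note, high_note))


# The melody rises and falls with the trace, like the seismograph needle.
readings = [-1000, -500, 0, 500, 1000, 5000]
print(list(samples_to_notes(readings)))  # → [48, 57, 66, 75, 84, 84]
```

Because `samples_to_notes` is a generator, it can consume readings one at a time as they arrive. Clamping keeps an unusually large spike from pushing the melody outside a playable range; an alternative design would adapt the range over time, at the cost of the same reading not always producing the same note.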

But the melodies produced from the data still need to be interpreted, and giving musical shape to movement when the score carries no expression markings is a huge artistic challenge. In their live performance, Dr Vicinanza and Dr Schwartz were at the mercy of nature: they did not know what to expect, or which natural phenomena would be detected at that moment.

If there is significant seismic activity, with big spikes in the streamed data, the music will be incredibly dramatic; if not, it could be quite serene. It is up to the musicians to interpret the resulting piece through tempo and articulation, making certain passages softer or louder. Using external, uncontrolled data adds a layer of unpredictability, making each piece totally unique and intimately linked to nature. At the live premiere, Dr Schwartz saw the melody on eduMEET for the first time, at the same moment as the audience, and then played it.

Our ears are good at identifying patterns, so hearing data can make it easier to spot anomalies, sudden changes and regularities. Listening to data also opens new ways for visually impaired researchers to access information, since they can hear the data rather than see it.

The role of GÉANT network technologies

eduMEET, the open-source video conferencing platform developed by and for the European research and education networking community, which needed a video conferencing service of its own, played a crucial role in making the live performance happen. The platform was used to connect and stream the data retrieved from the volcanic activity.

Its purpose has since expanded. Because it supports codecs that enable high video quality (up to 4K resolution) and an advanced Hi-Fi audio mode, it opens interesting opportunities for remote artistic rehearsals and joint remote performances. eduMEET can be used to create broadcast-standard content (in 16:9 mode) and can handle multiple cameras from a single participant, offering scope for new and innovative approaches to remote collaboration. eduMEET is virtually unique in supporting these high-performance codecs, which are usually limited to commercial broadcast-grade software.

eduMEET has also started collaborating with The Commons Conservancy, with the goal of becoming an independent open-source project by 2024.
